Jensen Huang has a bold prediction: every industrial company will become a robotics company. Whether or not that prediction plays out exactly as he envisions, the list of robotics organizations building on NVIDIA’s platform makes clear that the company has already established itself as a central player in a transformation that is genuinely underway.

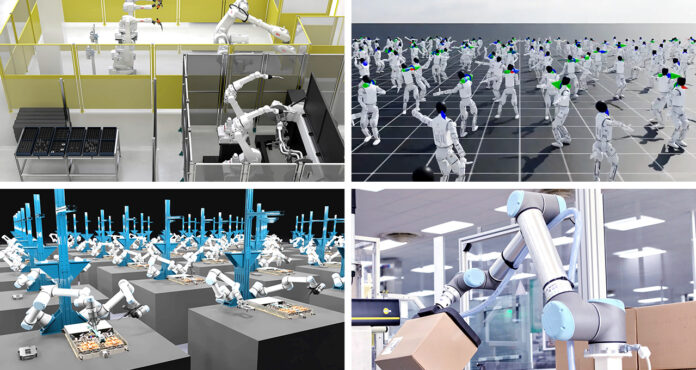

At GTC this week, NVIDIA announced a sweeping set of new tools, models, and partnerships across the robotics industry, covering everything from factory automation giants managing millions of deployed robots to startups building humanoids from scratch to surgical robotics companies operating under the strictest safety requirements in any industry. The breadth of what was announced is significant, and so is the consistency of the underlying strategy: build a full-stack platform, open the models, and let the ecosystem build on top.

The Industrial Giants Are Building Digital Twins First

The part of the announcement with the most immediate commercial significance involves the largest robotics companies in the world. FANUC, ABB Robotics, YASKAWA, and KUKA collectively operate a global install base of more than two million robots. That is not a future projection. Those robots are deployed in factories today, and the challenge these companies face is validating and optimizing increasingly complex AI-driven applications for systems that are already running in production.

All four companies are integrating NVIDIA Omniverse libraries and NVIDIA Isaac simulation frameworks into their virtual commissioning solutions. The goal is physically accurate digital twins of production lines that engineers can use to design, test, and optimize robot applications before making changes to actual factory equipment. Getting it wrong in simulation is cheap. Getting it wrong on a production line is not.

To power real-time AI inference on those production lines once systems are deployed, all four companies are also integrating NVIDIA Jetson modules into their robot controllers. Jetson handles the edge AI processing that allows robots to respond intelligently to their environment without sending data to a remote server first.

Building Robot Brains That Work Across Different Hardware

One of the harder problems in robotics is building AI systems that generalize across different robot embodiments and tasks rather than having to be trained from scratch for each new application. FieldAI and Skild AI are both working on this using NVIDIA Cosmos world models for data generation and Isaac simulation frameworks to validate policies in simulation before deploying them on physical robots.

World Labs is using Isaac Sim to validate its generative world models, and Generalist AI is using Cosmos to explore generating synthetic training data. The common thread is using simulation to create the volume and diversity of training data that would be impractical to collect in the physical world.

To accelerate this further, NVIDIA announced Cosmos 3, which it describes as the first world foundation model to unify synthetic world generation, vision reasoning, and action simulation in a single model. The significance of that unification is that developers can use one model to generate synthetic environments, reason about what is happening in them, and simulate how a robot would interact with them, rather than stitching together separate tools for each task.

Humanoids Are Getting a New Set of Tools

Building humanoid robots requires solving problems that industrial robots mostly avoid: bipedal locomotion, dexterous hand manipulation, real-time balance correction, and the ability to operate in environments designed for humans rather than purpose-built for machines. The list of companies working on this with NVIDIA is long and includes some of the most prominent names in the space.

1X, AGIBOT, Agility, Agile Robots, Boston Dynamics, Figure, Hexagon Robotics, Humanoid, Mentee, and NEURA Robotics are all using Cosmos world models, Isaac Sim, and Isaac Lab to accelerate the development and validation of their humanoid systems.

The new tools announced to support this work include Isaac Lab 3.0, now in early access, which enables faster large-scale robot learning on DGX-class infrastructure. Isaac Lab 3.0 is built on the Newton physics engine 1.0 and the NVIDIA PhysX SDK, adding multiphysics simulation and improved support for complex, dexterous manipulation tasks. The multiphysics capability is particularly relevant for humanoids because realistic simulation of how a robot hand interacts with deformable objects, soft materials, or complex mechanical assemblies requires modeling multiple physical phenomena simultaneously.

For deployment on actual hardware, these systems run on the NVIDIA Jetson Thor robotic computing platform, which gives developers a consistent path from simulation training to real-world deployment.

On the model side, NVIDIA Isaac GR00T N1.7 is now available in early access with commercial licensing, bringing generalized robot skills including advanced dexterous control to production-ready deployments. AGIBOT, Humanoid, LG Electronics, NEURA Robotics, and Noble Machines are all adopting GR00T N1.7 for industrial humanoid deployments.

During his keynote, Huang also previewed GR00T N2, a next-generation robot foundation model built on a new world action model architecture based on DreamZero research. According to NVIDIA, it helps robots succeed at new tasks in new environments more than twice as often as leading vision-language-action models. GR00T N2 currently ranks first on both MolmoSpaces and RoboArena for generalist robot policies and is expected to be available by the end of the year.

Healthcare Robotics Has Joined the Platform

Healthcare is a newer but important front in the physical AI story, and the stakes are as high as they get. Deploying autonomous systems in surgical and clinical environments requires meeting safety and regulatory standards that are far more stringent than those governing factory automation.

CMR Surgical is using Cosmos-H simulation to train and validate robotic intelligence for its Versius surgical system before clinical deployment. Johnson & Johnson MedTech is using Isaac Sim and Cosmos-based post-training workflows to train and validate systems for the Monarch Platform for Urology. Medtronic is exploring NVIDIA IGX Thor to deliver mission-critical precision and functional safety in its surgical robotic systems.

The presence of three major medical device and surgical robotics companies in the NVIDIA ecosystem signals that the platform is being taken seriously in one of the most demanding application domains for physical AI. Getting a robot certified for use in an operating room is a fundamentally different challenge from getting one approved for a warehouse, and the fact that these companies are building on NVIDIA’s simulation and inference infrastructure rather than developing entirely proprietary stacks is a meaningful vote of confidence.

Real-World Deployments Are Already Happening

Beyond the platform and model announcements, several concrete deployments and partnerships illustrate where all of this is already translating into production use.

Skild AI is partnering with ABB Robotics and Universal Robots to deploy generalized robot intelligence across different industries and tasks, embedding a shared intelligence layer into widely deployed industrial systems so that manufacturers can extend automation into more dynamic and variable workflows without writing task-specific code for every new application. Separately, Skild AI is partnering with Foxconn on high-precision assembly for NVIDIA Blackwell production lines, with Foxconn’s AI-driven dual-arm manipulators handling some of the most complex manufacturing tasks in the semiconductor supply chain.

Lightwheel is codeveloping and calibrating the Newton physics engine alongside Samsung to enable assembly robots to master cable handling in simulation, delivering higher precision and faster assembly.

PTC is announcing a new robotics design-to-simulation workflow connecting its cloud-native Onshape CAD platform to NVIDIA Isaac Sim. The integration creates a CAD-to-OpenUSD bridge that allows engineering teams including FANUC America and Fauna Robotics to design and validate robotic systems inside physically accurate digital twins without exporting between incompatible formats.

WORKR is integrating its AI platform with ABB Robotics industrial robots using NVIDIA Omniverse libraries as part of its WorkrCore system. The goal is to enable small and medium-sized manufacturers to deploy a robotic workforce in minutes without requiring programming knowledge, which addresses one of the most significant barriers to automation adoption outside large enterprise environments.

KION Group is working with NVIDIA and Accenture on autonomous warehouse solutions, using Omniverse and a physical AI-powered digital twin to train and test fleets of NVIDIA Jetson-based autonomous forklifts for GXO, the world’s largest pure-play contract logistics provider. The physics-accurate warehouse digital twins allow KION engineers to simulate large-scale deployments before putting hardware on an actual warehouse floor.

On the cloud infrastructure side, Microsoft Azure and Nebius are integrating the NVIDIA Physical AI Data Factory blueprint for scalable synthetic data generation. CoreWeave is integrating Isaac Lab to build robot learning pipelines. Alibaba Cloud is integrating the full NVIDIA physical AI stack into its Platform for AI to support end-to-end robotics development.

And then there is Disney. The Kamino physics simulator, built by Disney on the NVIDIA Warp framework and integrated into Newton, is being used to train robot policies for Disney’s Olaf character and BDX Droids. The training has allowed Olaf to learn to manage its own thermal output and reduce impact noise, and enabled BDX Droids to navigate complex environments. Olaf is set to debut at Disneyland Paris on March 29, and Huang was joined by the robot during his GTC keynote.

The Open Source and Startup Angle

NVIDIA is also making a deliberate effort to extend the physical AI platform to developers beyond the large enterprise tier. Through the NVIDIA Inception program, which has more than 40,000 member startups, NVIDIA provides robotics companies with access to its open physical AI stack, technical guidance, high-performance computing resources, and connections to partners and customers across the ecosystem. Current Inception members working in robotics include Bedrock Robotics, Dexterity AI, Flexion, Lightwheel, RIVR, Standard Bots, Vention, and World Labs.

NVIDIA has also partnered with Hugging Face to integrate Isaac and GR00T into the LeRobot open source framework. The integration connects NVIDIA’s two million robotics developers with Hugging Face’s 13 million AI builders, creating a larger shared community around open source robotics development.

What This All Adds Up To

The scale and diversity of what NVIDIA announced for robotics at GTC this week are genuinely impressive, and they reflect a company that has been building its physical AI platform systematically for several years rather than scrambling to catch up to a trend.

The strategy is the same one NVIDIA has used in other domains: establish the platform, open the models, build the ecosystem, and make it progressively harder for developers and companies to justify building on anything else. In robotics, that strategy appears to be working. The combination of industrial automation giants, humanoid pioneers, surgical robotics leaders, cloud providers, and startups all building on the same underlying stack is the kind of ecosystem density that is difficult to replicate once it reaches a certain scale.

The harder question is execution. Physical AI involves deploying systems in the real world where the consequences of failure are tangible and sometimes serious. The simulation tools, foundation models, and platform integrations NVIDIA is providing can accelerate development significantly, but they do not eliminate the fundamental difficulty of making robots that work reliably in complex, unstructured environments. The announcements from GTC tell us where the industry is investing and what the roadmap looks like. The factories, hospitals, and warehouses where these systems eventually run will tell us whether the investment is paying off.
