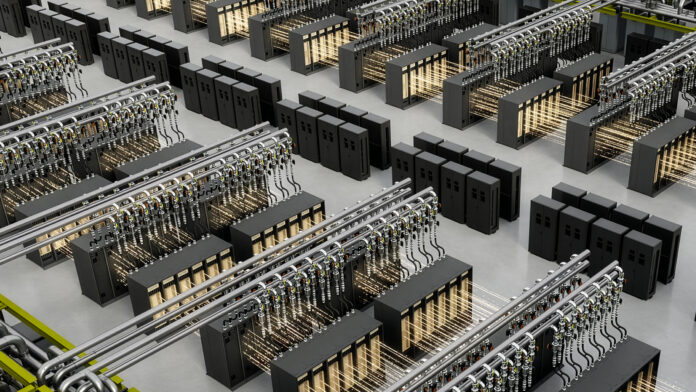

Building a large-scale AI factory is one of the most complex infrastructure challenges in the world right now. You are not just buying servers and plugging them in. You are coordinating thousands of GPUs, massive power delivery systems, sophisticated cooling infrastructure, high-speed networking fabrics, and software stacks that have to work together perfectly from day one. Get any of it wrong and you are looking at delayed deployments, wasted energy, and millions of dollars in underperforming infrastructure. NVIDIA thinks it has a better way to approach this, and it involves simulating the entire thing before construction even begins.

The company has announced the NVIDIA Vera Rubin DSX AI Factory reference design alongside the general availability of the NVIDIA Omniverse DSX Blueprint. Together, they form a comprehensive framework for designing, building, simulating, and operating large-scale AI factories, and a significant portion of the infrastructure industry has already signed on to support them.

What the Reference Design Actually Does

The Vera Rubin DSX AI Factory reference design is essentially a detailed blueprint for how to build an AI factory the right way. It covers the full infrastructure stack, spanning compute, Spectrum-X Ethernet networking, and storage, and it documents best practices for designing and operating power, cooling, and control systems in a way that enables seamless hardware and software integration from the start.

The goal is repeatability and scalability. Rather than having every organization figure out from scratch how to codesign these systems for maximum performance, NVIDIA is providing a reference that captures the knowledge of how all these components interact and how to extract the most tokens per watt out of the available energy budget. That last part matters enormously right now. Power is one of the most constrained resources in AI infrastructure, and squeezing more compute out of every watt directly affects both operating costs and how quickly a facility can scale.

The software stack that sits on top of the reference design is built to be open, modular, and composable. It connects cluster hardware with power and cooling systems and provides several distinct software libraries that address different aspects of the optimization problem.

DSX Max-Q helps AI factories maximize computing output and token performance per watt within a fixed power budget. DSX Flex connects AI factories to power grid services and enables dynamic adjustment of power consumption, coordinating with onsite generation to reduce energy costs and help maintain grid stability. DSX Exchange handles integration of compute, network, energy, power, and cooling signals between IT systems, operational technology, and operations agents. DSX Sim validates designs as high-fidelity digital twins, using the NVIDIA DSX Air platform to model GPUs, networking, and partner infrastructure before anything is physically deployed.
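To make the "tokens per watt within a fixed power budget" idea concrete, here is a minimal Python sketch. It is not the DSX Max-Q API, which the announcement does not describe. The `OperatingPoint` type, the `best_operating_point` function, and every number below are hypothetical, chosen only to show why running more GPUs at a lower power cap can beat running fewer GPUs flat out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    """A hypothetical per-GPU power cap and the throughput it yields."""
    watts_per_gpu: float
    tokens_per_s_per_gpu: float


def best_operating_point(points, it_power_budget_w, installed_gpus):
    """Return (point, total_tokens_per_s, active_gpus) with the highest
    facility-wide throughput that fits inside the IT power budget."""
    best = None
    for p in points:
        # GPUs that can run at this cap without exceeding the budget.
        active = min(installed_gpus, int(it_power_budget_w // p.watts_per_gpu))
        total = active * p.tokens_per_s_per_gpu
        if best is None or total > best[1]:
            best = (p, total, active)
    return best


# Illustrative numbers only (assumptions, not NVIDIA figures).
points = [
    OperatingPoint(1000.0, 100.0),  # full power
    OperatingPoint(800.0, 90.0),    # moderate cap
    OperatingPoint(600.0, 70.0),    # aggressive cap
]
point, total_tps, active = best_operating_point(
    points, it_power_budget_w=1_000_000.0, installed_gpus=1500
)
tokens_per_watt = total_tps / (active * point.watts_per_gpu)
print(point, total_tps, active, tokens_per_watt)
```

With these made-up numbers, the moderate cap wins: it gives up 10 percent of per-GPU throughput but lets 25 percent more GPUs run inside the same megawatt, so total output rises.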

The Digital Twin Part Is Where Things Get Interesting

Even with a solid reference design in hand, the actual process of designing and building a large AI factory is extraordinarily difficult. Traditional design methods struggle to model entire systems holistically, and they offer limited ability to validate designs before construction begins. The consequences of getting something wrong at the design stage are severe, because fixing it after the fact is expensive and time-consuming.

The NVIDIA Omniverse DSX Blueprint addresses this by providing a framework for building physically accurate digital twins of AI factories. It is now generally available on build.nvidia.com and is fully compatible with the Vera Rubin DSX reference design. Using the blueprint, organizations can simulate their entire AI factory in software, including layouts, power topologies, thermal behavior, and operational policies, and evaluate the impact of hardware or workload changes without touching the physical system.

This is not a simple visualization tool. Omniverse DSX unifies power, cooling, networking, and operations in a single simulation environment, which means the interactions between all of those systems can be modeled together rather than in isolation. Thermal changes affect power draw, power draw affects cooling requirements, cooling capacity affects how densely GPUs can be packed, and all of those relationships can be explored in simulation before a single piece of hardware is ordered.
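The coupling described above can be illustrated with a deliberately tiny model. This is a sketch under stated assumptions, not how Omniverse DSX works internally. The linear leakage term, the thermal resistance, the cooling coefficient of performance (COP) values, and the function names `settle_gpu_power` and `gpus_within_budget` are all hypothetical.

```python
def settle_gpu_power(dynamic_w, coolant_c, thermal_res_c_per_w,
                     leak_w_per_c, ref_c=25.0, tol=1e-6, max_iter=1000):
    """Fixed-point iteration: power heats the chip, and a hotter chip
    leaks more power. Returns (settled_power_w, chip_temp_c)."""
    power = dynamic_w
    for _ in range(max_iter):
        temp = coolant_c + thermal_res_c_per_w * power
        new_power = dynamic_w + leak_w_per_c * max(0.0, temp - ref_c)
        if abs(new_power - power) < tol:
            return new_power, temp
        power = new_power
    raise RuntimeError("power/temperature loop did not converge")


def gpus_within_budget(facility_budget_w, gpu_power_w, cooling_cop):
    """Each watt of GPU heat is assumed to cost 1/COP watts of cooling."""
    per_gpu_total_w = gpu_power_w * (1.0 + 1.0 / cooling_cop)
    return int(facility_budget_w // per_gpu_total_w)


# Assumed scenario: warmer coolant is cheaper to produce (higher COP)
# but lets the chip run hotter, which raises leakage power.
warm_power, warm_temp = settle_gpu_power(900.0, 35.0, 0.03, 2.0)
cool_power, cool_temp = settle_gpu_power(900.0, 25.0, 0.03, 2.0)

warm_gpus = gpus_within_budget(10_000_000.0, warm_power, cooling_cop=6.0)
cool_gpus = gpus_within_budget(10_000_000.0, cool_power, cooling_cop=4.0)
print(warm_power, warm_gpus)
print(cool_power, cool_gpus)
```

In this toy setup, colder coolant lowers per-GPU power, yet the extra cooling energy means fewer GPUs fit in the same facility budget. Looking at either subsystem alone would point to the wrong answer, which is the argument for modeling them together.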

Jensen Huang, founder and CEO of NVIDIA, framed the investment in terms of how central AI factories have become to the broader technology economy, noting that intelligence tokens are the new currency and that AI factories are the infrastructure generating them. In his framing, the reference design and blueprint are about maximizing the productivity of that infrastructure and getting to first revenue as quickly as possible.

The Partner Ecosystem Is Enormous

One of the most striking things about the DSX announcement is how broad the partner involvement already is. This is not NVIDIA releasing a reference design and hoping the industry catches up. Major players across infrastructure, software, energy, and construction have already integrated their platforms into the DSX ecosystem.

On the infrastructure and engineering software side, Dassault Systèmes is integrating the reference design and blueprint into its Model Based Systems Engineering platform, powered by CATIA, to build what it calls a Virtual Twin of AI Factory. Schneider Electric is integrating its ETAP platform to help users simulate and optimize power distribution systems. Cadence is integrating SimReady models of the NVIDIA GB300 NVL72 system into its Reality Data Center Digital Twin Platform to simulate thermal and fluid dynamics for AI factory design. Siemens is developing a framework to balance high-density compute with power, cooling, and automation for AI infrastructure.

Jacobs has built a new Data Center Digital Twin solution using the Omniverse DSX Blueprint, offering digital twins for builders and operators to optimize AI factories from early planning through ongoing operations. PTC is integrating the blueprint into its Windchill product lifecycle management solution for what it calls the DSX Accelerator, connecting engineering and product design data with high-fidelity real-time simulation. Procore is integrating NVIDIA Omniverse libraries and the DSX Blueprint into its construction management platform to create a continuous digital thread across the full construction lifecycle.

Switch is building its EVO AI Factories and LDC EVO operating system with the Omniverse DSX Blueprint, using it for real-time telemetry ingestion and continuously updated digital twins built to Rubin DSX specifications. CoreWeave is using NVIDIA DSX Air to build and test digital twins of AI factories entirely in the cloud, which it says has significantly shortened validation time by enabling operational rehearsals well ahead of physical delivery.

On the hardware simulation side, Eaton, Schneider Electric, Siemens, Trane Technologies, and Vertiv are all providing SimReady assets of their generators, electrical equipment, and cooling systems so that AI factory designers can simulate and validate complete facility designs in software before committing to physical construction. Vertiv is taking this further by using the Omniverse DSX Blueprint to build Vertiv OneCore Rubin DSX, a prefabricated converged data center infrastructure solution designed to accelerate AI factory deployment. Trane Technologies is using the blueprint to optimize thermal management for gigawatt-scale facilities, targeting reductions in cooling plant power use and overall efficiency improvements.

Phaidra has integrated DSX Max-Q into a self-learning AI agent that it says delivers approximately 10 percent more compute. According to the company, the agent reduces cooling spikes while maintaining safety margins, which frees up power for revenue-generating token production. That is a meaningful improvement at the scale of a large AI factory, where cooling overhead can represent a significant fraction of total power consumption.

The Energy Problem Is Real and Getting Worse

One section of the announcement stands out as particularly urgent: the energy situation facing AI infrastructure buildouts right now is severe. NVIDIA says there are over 300 billion dollars in equipment backlogs and more than 200 gigawatts of projects waiting in US interconnection queues. The demand for AI compute is outpacing the ability of the power grid to support it, and that gap is not closing quickly.

NVIDIA is addressing this through DSX Flex and a set of partnerships with major energy companies. Emerald AI is integrating DSX Flex with its Conductor platform to help AI factories manage power in real time, turning demand up or down on command and coordinating flexible load with dedicated generation. The goal is to give utilities greater confidence to approve larger and faster grid connections by demonstrating reliable software-based load control.
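Software-based load control of the kind described above can be sketched as a simple curtailment plan. This is an illustration only, not the DSX Flex or Emerald AI Conductor interface, neither of which is documented in the announcement. The `Job` type, the `curtail` function, the priority scheme, and the megawatt figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    """A hypothetical workload with a throttling floor and a priority."""
    name: str
    power_mw: float
    min_power_mw: float  # lowest power the job can be throttled to
    priority: int        # higher means more important


def curtail(jobs, cap_mw):
    """Throttle the lowest-priority jobs first until total draw fits the
    cap requested by the grid. Returns {job name: allotted MW}."""
    plan = {j.name: j.power_mw for j in jobs}
    excess = sum(plan.values()) - cap_mw
    for j in sorted(jobs, key=lambda job: job.priority):
        if excess <= 0:
            break
        cut = min(excess, j.power_mw - j.min_power_mw)
        plan[j.name] -= cut
        excess -= cut
    if excess > 1e-9:
        raise ValueError("cap cannot be met without violating job floors")
    return plan


# Illustrative fleet drawing 100 MW; the grid asks for a 70 MW cap.
jobs = [
    Job("batch-training", 40.0, 10.0, priority=1),
    Job("fine-tuning", 20.0, 10.0, priority=2),
    Job("inference", 40.0, 35.0, priority=3),
]
plan = curtail(jobs, cap_mw=70.0)
print(plan)
```

Here the whole 30 MW reduction is absorbed by the lowest-priority batch job, while latency-sensitive inference is untouched. A real system would also have to handle ramp rates, onsite generation, and notice periods, none of which this sketch models.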

GE Vernova is extending digital twin capabilities across the full power stack from the grid to the AI factory itself, aligning with the NVIDIA DSX reference architecture to unify power and compute modeling for faster and more predictable infrastructure deployment. Hitachi is partnering with NVIDIA to accelerate grid planning and deliver efficient, reliable power for gigawatt-scale AI factories, combining its power systems and automation expertise with NVIDIA computing platforms. Siemens Energy is using NVIDIA RAPIDS libraries, the NVIDIA Metropolis platform, and the NVIDIA Isaac Sim framework in its Noedra digital twin platform to monitor grid health in real time and predict risks before they become failures.

What Is Actually Getting Built

Some of the most concrete evidence of where all this is heading comes from the deployments already underway. Nscale and Caterpillar are using Vera Rubin DSX reference designs to build a multi-gigawatt AI factory site in West Virginia that NVIDIA describes as one of the largest AI factories in the world. That project alone illustrates the scale at which these reference designs and simulation tools are being applied.

Why This Matters Beyond the Technical Details

The DSX announcement reflects something important about where the AI infrastructure industry is in its maturity. The early days of AI infrastructure were characterized by rapid experimentation, custom builds, and a lot of expensive lessons learned through trial and error. The industry is now large enough, and the stakes are high enough, that a more systematic and engineered approach has become necessary.

Reference designs, digital twins, and simulation-based validation are the tools of mature engineering disciplines. The fact that NVIDIA is introducing them for AI factories, and that a broad ecosystem of established engineering and infrastructure companies is embracing them, suggests that AI infrastructure is graduating from a frontier technology into something that operates more like a mature industrial system, with all the standards, tools, and best practices that entails.

Whether that translates into AI factories that actually get built faster and operate more efficiently is something the industry will find out over the next few years. But the direction is clear, and the seriousness of the partner commitments behind it suggests this is not just a reference design that sits in a documentation repository. People are already building with it.
